Machine learning

Machine learning is a rapidly developing area of AI. In recent years, academia and industry alike have developed many impressive machine learning applications. Here are just a few applications we have developed in our lab.

Autonomous Driving

State of the Art in Autonomous Driving

The Society of Automotive Engineers (SAE) has designated six levels of automated driving in its international standard J3016. In the first three levels, the human driver monitors the driving environment, while in the last three levels, the automated driving system monitors the driving environment (Fig. 1). The six levels are defined in the following sub-sections.

Fig. 1. Stages in autonomous driving

Human driver monitoring

In levels 0-2, the human driver is responsible for monitoring the driving environment. The levels of autonomy in this group are described below:

Level 0

The human driver controls the steering, acceleration and braking of the car. No automation is included in the act of driving.

Level 1

This is a driver-assistance level. Most of the basic driving functions are still controlled by the human driver, but a specific function can be done automatically by the car.

Level 2

At least one driver-assistance system automates both steering and acceleration/deceleration, using information about the driving environment (e.g. adaptive cruise control combined with lane centering). The human driver need not physically operate the vehicle controls, and may keep hands off the steering wheel and feet off the pedals at the same time. However, the driver must always be ready to take control of the vehicle when necessary (for example, in an emergency).

Automated driver monitoring

In levels 3-5, the automated driving system is responsible for monitoring the driving environment.

Level 3

A driver is still necessary in a Level 3 car, but can completely shift "safety-critical functions" to the vehicle under certain traffic or environmental conditions. The driver is still present and will intervene if necessary, but is not required to monitor the situation in the same way as at the previous levels.

Level 4

This is what is meant by fully autonomous. Level 4 vehicles are designed to perform all safety-critical driving functions and monitor roadway conditions for an entire trip. However, it is important to note that this is limited to the operational design domain of the vehicle - meaning it does not cover every driving scenario.

Level 5

This refers to a fully-autonomous system that expects the vehicle's performance to equal that of a human driver, in every driving scenario - including extreme environments like dirt roads that are unlikely to be navigated by driverless vehicles in the near future.
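The split between the two monitoring groups can be captured in a small lookup. The following is only an illustrative sketch: the enum member names follow the usual J3016 level names, which are not spelled out in the text above, and `environment_monitor` is a hypothetical helper.

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """The six SAE J3016 levels (member names are illustrative labels)."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def environment_monitor(level: SAELevel) -> str:
    """Return who monitors the driving environment at the given level."""
    if level <= SAELevel.PARTIAL_AUTOMATION:
        return "human driver"  # levels 0-2
    return "automated driving system"  # levels 3-5


for level in SAELevel:
    print(int(level), level.name, "->", environment_monitor(level))
```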

Aims of this study

Developing software systems for self-driving cars is a hotbed of research these days. Many companies, overseas and in Japan in particular, are investing heavily in developing such systems. The Japanese government is determined to introduce fully-functioning self-driving cars for the Tokyo Olympics in 2020. However, most of the research relies on highly specialized, extremely complex and costly equipment to achieve the goal of self-driving. In this research, we propose a very simple and cost-effective method of “Soft computational machine learning” to implement self-driving. Machine learning techniques and algorithms have made great progress in recent years. These techniques and algorithms can be applied directly to achieve high accuracy in the various functions of self-driving, such as lane keeping, obstacle avoidance, pedestrian detection, signal detection and recognition, and traffic-sign recognition.

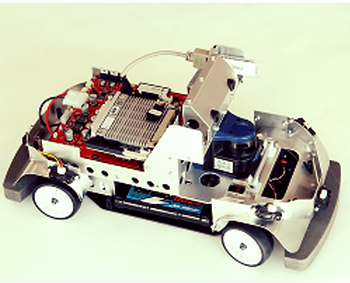

Most automakers fit their equipment onto cars and test it in real-life driving. This is risky and costly. Our original contribution is to recreate such a real-life environment in the laboratory. We control the steering, acceleration and brakes, and the robocar learns from our driving actions.

Fig. 2. Our original idea to teach the robocar to drive by itself
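Learning from a human's driving actions can be framed as supervised imitation (often called behavioral cloning). The sketch below is a deliberately tiny stand-in for that idea, not the model used in our lab: it assumes a single hypothetical input feature (the car's lateral offset from the lane center) and fits a straight-line steering policy to recorded demonstrations by ordinary least squares. The function names and the demonstration values are made up for illustration.

```python
def fit_steering_policy(offsets, steering):
    """Fit steering = w * offset + b to demonstrations by least squares."""
    n = len(offsets)
    mean_x = sum(offsets) / n
    mean_y = sum(steering) / n
    var_x = sum((x - mean_x) ** 2 for x in offsets)
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(offsets, steering))
    w = cov_xy / var_x
    b = mean_y - w * mean_x
    return w, b


def predict_steering(offset, w, b):
    """Apply the learned policy to a new observation."""
    return w * offset + b


# Hypothetical demonstrations: when the car drifts right (positive offset),
# the human steers left (negative command), and vice versa.
demo_offsets = [-1.0, -0.5, 0.0, 0.5, 1.0]
demo_steering = [0.5, 0.25, 0.0, -0.25, -0.5]

w, b = fit_steering_policy(demo_offsets, demo_steering)
print(predict_steering(0.2, w, b))
```

A camera-based system would replace the single offset feature with image input and the line fit with a neural network, but the training signal (the human's recorded actions) plays the same role.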

Self-driving Modules

Fig. 3. Self-driving Modules

Laboratory set-up

First of all, we have achieved over 96% accuracy in object recognition, lane keeping, signal recognition and traffic-sign recognition in our simulation studies, and the results are published in international conference proceedings. The first phase, developing mature algorithms to learn various aspects of autonomous driving, is nearing completion. The second phase consists of training the robocar to drive by itself by implementing the learning algorithms developed in the simulation environment. The final research project will consist of building a driving circuit in a larger space. The lanes will be demarcated, and plastic models of buildings, trees, lamp-posts, etc. will be placed on the floor to create a real-life driving environment. There will be signals and pedestrian crossings. Small humanoid robots will act as pedestrians, imitating human behavior while crossing streets.

Fig. 4. Driving circuit for self-driving cars in the laboratory

Driving Modules

Lane keeping

Lane keeping is the most basic task in road driving. Most self-driving cars rely on special roadside infrastructure to steer along the way. Our research, however, concentrates on making the vehicle learn to drive based only on the visual information picked up by the car camera.
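To make the input and output of a vision-only lane keeper concrete, here is a hand-coded geometric baseline. It is not the learned model described above: it assumes a hypothetical pre-processing step has already reduced one camera row to a binary mask (1 = lane-marking pixel), and the gain value is arbitrary.

```python
def lane_center_offset(row):
    """Normalized offset of the lane center from the image center.

    `row` is a list of 0/1 values for one image row. Returns a value in
    [-1, 1] (positive = lane center lies to the right), or None if a
    marking is missing on either side.
    """
    width = len(row)
    mid = width // 2
    left = [i for i in range(mid) if row[i]]
    right = [i for i in range(mid, width) if row[i]]
    if not left or not right:
        return None
    # Use the innermost marking pixel on each side as the lane boundary.
    lane_center = (left[-1] + right[0]) / 2
    image_center = (width - 1) / 2
    return (lane_center - image_center) / image_center


def steering_command(offset, gain=0.8):
    """Proportional steering toward the lane center, clamped to [-1, 1]."""
    return max(-1.0, min(1.0, gain * offset))


row = [0, 0, 1, 0, 0, 0, 0, 0, 0, 0, 1]  # markings at columns 2 and 10
offset = lane_center_offset(row)
print(offset, steering_command(offset))
```

A learned lane keeper maps the camera image to a steering value directly, so the hand-written boundary search and gain disappear into the trained model.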

Traffic signals

The next challenge in self-driving is the recognition of signals. The scene picked up by the front camera of the car is analyzed to pick out blobs of light. These are then classified as signals or non-signals; finally, the color of each identified signal is extracted. We have obtained more than 95% accuracy in the identification of signals on standard datasets.
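The last stage of this pipeline, color extraction, can be illustrated with a simple hue test. This is a sketch under stated assumptions rather than our classifier: it assumes a blob has already been detected and accepted as a signal, that its pixels are given as 8-bit RGB tuples, and that the hue, saturation and brightness thresholds below (all hypothetical) are adequate.

```python
import colorsys


def signal_color(blob_pixels):
    """Classify the mean color of a blob as 'red', 'yellow', 'green' or 'unknown'.

    `blob_pixels` is a list of (R, G, B) tuples with values in 0..255.
    """
    n = len(blob_pixels)
    r = sum(p[0] for p in blob_pixels) / n / 255
    g = sum(p[1] for p in blob_pixels) / n / 255
    b = sum(p[2] for p in blob_pixels) / n / 255
    h, s, v = colorsys.rgb_to_hsv(r, g, b)
    # Dim or washed-out blobs are unlikely to be a lit signal lamp.
    if s < 0.4 or v < 0.5:
        return "unknown"
    hue_deg = h * 360
    if hue_deg < 20 or hue_deg >= 340:
        return "red"
    if 35 <= hue_deg < 75:
        return "yellow"
    if 90 <= hue_deg < 180:
        return "green"
    return "unknown"


print(signal_color([(250, 20, 10), (240, 30, 20)]))
print(signal_color([(20, 230, 90)]))
```

Fixed thresholds like these are sensitive to lighting and camera settings, which is one reason to learn the classification stages from data instead.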

Traffic signs

Traffic-sign recognition poses a considerable challenge for most image recognition algorithms, given the complexity and variety of traffic signs. We have used several well-known algorithms and convolutional neural networks to achieve very high accuracy in training the autonomous driving system to identify the traffic signs placed along the roadside.

Obstacle avoidance

Obstacles are a part of life, and there can be no real driving without avoiding them. Our convolutional neural networks have learnt to detect obstacles on the road, relying mostly on visual information. They are also capable of estimating the level of danger posed by a given obstacle, relying on fuzzy calculations.
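As an illustration of what a fuzzy danger estimate can look like, the sketch below uses a small zero-order Sugeno-style rule base. Everything specific in it is an assumption made for the example: the two inputs (distance to the obstacle and closing speed), the membership breakpoints, the four rules and their output values are hypothetical and not taken from our system.

```python
def falling(x, lo, hi):
    """Membership that is 1 below `lo` and falls linearly to 0 at `hi`."""
    if x <= lo:
        return 1.0
    if x >= hi:
        return 0.0
    return (hi - x) / (hi - lo)


def danger_level(distance_m, closing_speed_mps):
    """Fuzzy danger estimate in [0, 1] from distance and closing speed."""
    near = falling(distance_m, 5.0, 30.0)
    far = 1.0 - near
    slow = falling(closing_speed_mps, 2.0, 10.0)
    fast = 1.0 - slow
    # Each rule: (firing strength using min as fuzzy AND, crisp danger value).
    rules = [
        (min(near, fast), 1.0),  # near and closing fast -> high danger
        (min(near, slow), 0.6),  # near but closing slowly -> moderate
        (min(far, fast), 0.4),   # far but closing fast -> some danger
        (min(far, slow), 0.0),   # far and closing slowly -> no danger
    ]
    total = sum(strength for strength, _ in rules)
    # Weighted average of rule outputs (total is always positive here,
    # because near + far = 1 and slow + fast = 1).
    return sum(strength * value for strength, value in rules) / total


print(danger_level(3.0, 12.0))
print(danger_level(10.0, 5.0))
print(danger_level(50.0, 0.0))
```

The appeal of this style is that the output changes smoothly as an obstacle approaches, instead of jumping at a hard distance threshold.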

Gonken driving school

Before we run the RoboCar on the test driving course, we test the performance of the learning agent in a simulation environment. You can test your own driving skills in this virtual environment. Along with our program, you will need a gaming steering wheel and pedal set to enjoy the fun!